What drives these types of visual search responses?

Our advanced Gemini models make AI Mode possible, and its multimodal capabilities benefit from the visual expertise we’ve built into Lens over the years. When you search with an image, Gemini analyzes the image along with your question to decide which tools to use. Let’s say you’re scrolling on your phone and see an outfit on social media that you love. When you search for it, the model knows to use Lens to retrieve image results for the outfit’s hat, shoes, and jacket simultaneously. It then weaves these individual results into one easy-to-read answer.

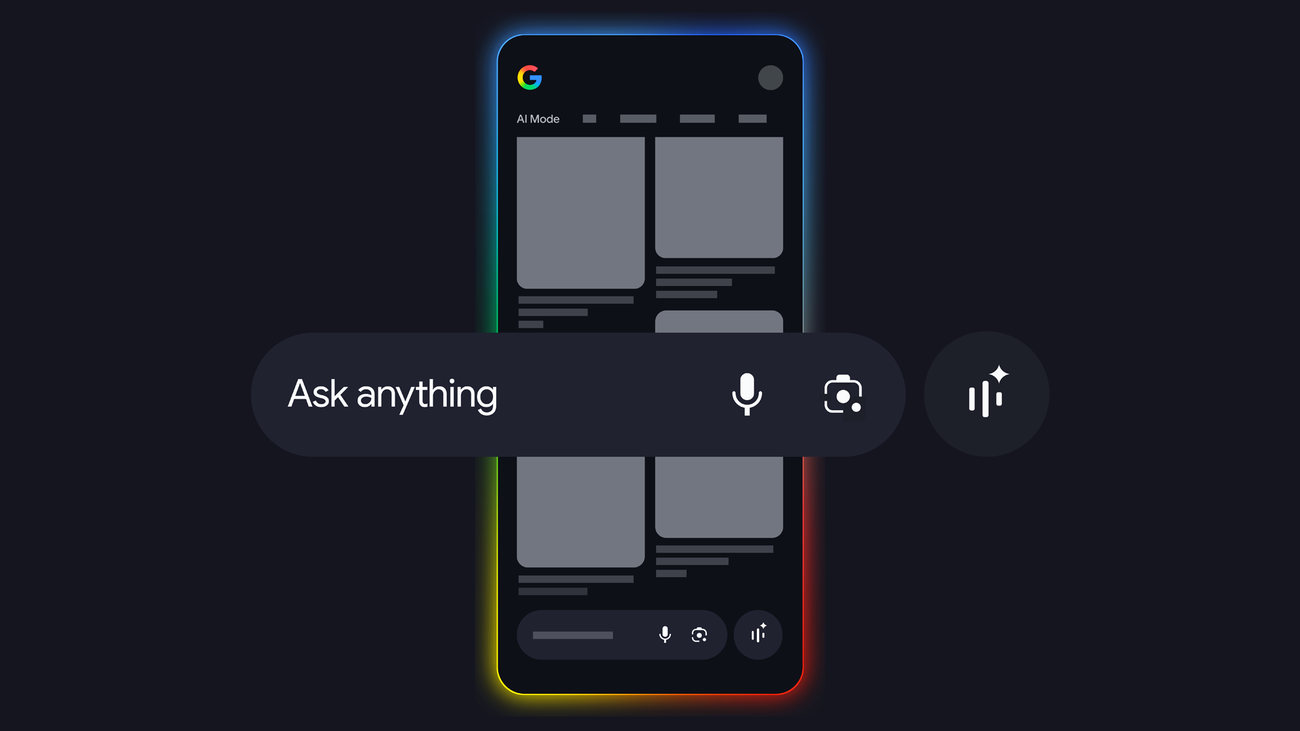

Think of it this way: the AI model acts as the “brain” that can “see” the image, while the visual search backend acts as the “library” containing billions of web results. The model performs multi-object reasoning to understand what you’re looking at. It then uses a “fan-out” technique that triggers multiple searches at once, reads through the results, and presents a single, coherent answer with helpful links, all in seconds.

Can you explain the fan-out technique?

AI Mode basically does a dozen searches for you in the time it takes to do one. If you upload a photo of a garden you admire, you may have several questions: Will these plants survive in the shade? Are they right for my climate? How much maintenance do they need?

Before, you would have had to ask them one by one. Now AI Mode identifies all the necessary “fan-out” searches and runs them together. It pulls together the care requirements for each plant in the image using helpful web results, breaks down the information, and even suggests next steps you might want to take. As AI Mode reveals more visual results from a single search, it’s easier than ever to find just what you’re looking for and stumble upon something new that piques your interest.
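For readers who think in code, here is a minimal sketch of the fan-out idea using the garden example. It is an illustration under stated assumptions, not how AI Mode is actually implemented: `search`, `fan_out_queries`, and `answer` are hypothetical names, the search call is a simulated stand-in for a real backend, and the plant names are made up for the example.

```python
import asyncio


async def search(query: str) -> str:
    """Hypothetical stand-in for a search backend (not a real API)."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"result for: {query}"


def fan_out_queries(objects: list[str], questions: list[str]) -> list[str]:
    """Build one query per (object, question) pair."""
    return [q.format(plant=obj) for obj in objects for q in questions]


async def answer(objects: list[str], questions: list[str]) -> dict[str, str]:
    """Run every fan-out query concurrently and collect the results."""
    queries = fan_out_queries(objects, questions)
    # gather() starts all searches at once and returns results in query order.
    results = await asyncio.gather(*(search(q) for q in queries))
    return dict(zip(queries, results))


if __name__ == "__main__":
    # Assumed output of the image-understanding step: plants seen in the photo.
    plants = ["hosta", "fern"]
    questions = [
        "does {plant} survive in shade",
        "{plant} climate zones",
        "{plant} maintenance needs",
    ]
    combined = asyncio.run(answer(plants, questions))
    for query, result in combined.items():
        print(query, "->", result)
```

Two plants and three questions produce six searches that run side by side, so the total wait is roughly the time of the slowest search rather than the sum of all six. A real system would then summarize the collected results into one answer, a step this sketch leaves out.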

Do you have to start with an image to get this kind of help in AI Mode?

Not at all! You can start with a simple text search in AI Mode, like “visual inspo for work clothes.” When you see a result you like, just say “Show me more options like the other skirt.” The system immediately takes that specific image and begins the fan-out process from there.

It certainly works great for shopping – what else can you use it for?

You can take a photo of a wall in a museum and ask for explanations of each painting. Or take a picture of a bakery window and ask what all the different cakes are. It’s about moving from “What is this one thing?” to “Explain this whole scene to me.”

Sounds like I have some photos to take and a lot more to discover. I’m in the process of trying these tools!